The Future of Human-Machine Teaming (HMT)

Two recent reports prompted us to take a fresh look at the future of human-machine teaming: the Ukrainian army capturing a Russian position using only ground robotic systems and unmanned aerial vehicles, and a “record-breaking” launch of a ‘Sting’ interceptor drone over 2,000 kilometres (1,240 miles), with the operator based in northern Ukraine (4, 5).

What is unfolding on the battlefields of Ukraine is not just rapid innovation under pressure, but a fundamental shift in how wars will be fought and, more importantly, how humans and machines will collaborate in high-stakes environments. This collaboration is no longer theoretical. It is operational, iterative, and delivering measurable battlefield outcomes.

At its core, human-machine teaming (HMT) is about combining the cognitive strengths of humans with the speed, scale and precision of machines. Crucially, the aim is amplification, not replacement. As highlighted in multiple analyses of Ukraine’s battlefield innovation, the integration of AI-enabled systems with human operators is accelerating decision-making cycles and increasing operational tempo (3, 6).

From experimentation to operational reality

Ukraine has effectively become a live testbed for HMT. What might previously have taken decades of doctrinal development is now being compressed into months. According to recent reporting, unmanned aerial systems, ground robots and AI-enabled targeting tools are increasingly operating in coordinated networks, often with limited direct human control at the tactical level (3, 5).

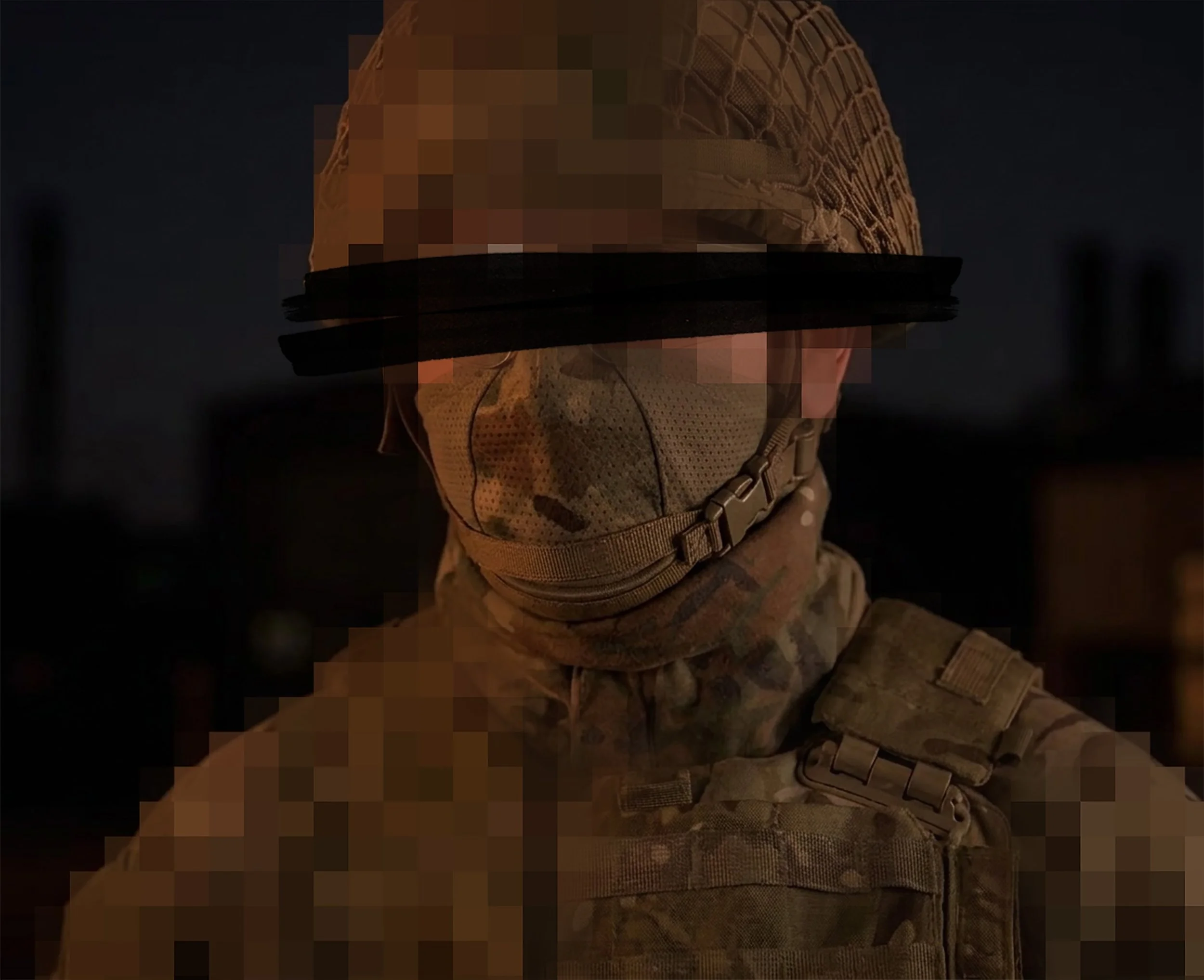

However, this does not signal the removal of humans from the loop. Instead, it reflects a shift in their role. Humans are setting objectives, interpreting ambiguity and making high-level decisions, while machines handle execution at speed and scale. This aligns with broader defence thinking that emphasises “human-on-the-loop” or “human-in-command” models rather than full autonomy (9, 10).

Distributed operations and the erosion of distance

One of the most significant developments is the rise of distributed, networked operations. Drone swarms and remotely operated systems allow operators to engage targets from vast distances, reducing risk to personnel and increasing operational reach. The long-range deployment of interceptor drones from within Ukraine illustrates how physical proximity is becoming less relevant (5).

This shift has major implications for force structure. Traditional models built around mass and proximity are being supplemented by smaller, highly connected teams supported by autonomous systems. As noted in recent analysis, HMT enables a form of distributed lethality, where combat power is dispersed but still highly coordinated (1).

Kelvin Yeung, CTO at Aeris-UK, added:

“Ukraine’s use of semi-autonomous drones also suggests a shift toward more agentic forms of HMT. Rather than relying on constant human input, systems can increasingly execute parts of a mission within human-defined objectives, constraints and rules of engagement. The human role is not removed; it moves from direct control of every action toward supervision, intent-setting and intervention when needed” (11).

Trust as the critical enabler

Despite these advances, one of the most persistent challenges in HMT is trust. For teams to function effectively, human operators must trust the outputs and behaviours of machine systems. This is not purely a technical issue. It is deeply human.

Research from the Alan Turing Institute highlights that trust is shaped by transparency, predictability and user understanding (2). If systems are perceived as opaque or unreliable, operators may ignore or override them, undermining their effectiveness.

Similarly, work from the Georgetown University Center for Security and Emerging Technology emphasises the importance of explainable AI and human-centred design in building confidence in machine systems (8). In practice, this means designing interfaces and workflows that align with human cognition, not just technical capability.

Ethics and meaningful human control

As machines take on greater roles in sensing, targeting and even engagement, ethical considerations become unavoidable. The concept of “meaningful human control” has emerged as a guiding principle in Western defence policy, ensuring that humans retain accountability for critical decisions (10).

The UK government, for example, is actively investing in AI-enabled systems designed to detect explosive threats and protect personnel, while maintaining strict oversight and ethical safeguards (12). This reflects a broader recognition that technological advantage must be balanced with legal and moral responsibility.

Resilience in contested environments

Another defining feature of future HMT is resilience. These systems must operate in environments where communications are degraded, GPS is denied, and adversaries are actively attempting to disrupt operations.

This requires flexible autonomy. Systems must be capable of operating independently when disconnected, but seamlessly reintegrating with human operators when connectivity is restored. According to recent analysis, this adaptability will be critical in ensuring continuity of operations in contested domains (6).

Cybersecurity also becomes central in this context. As reliance on interconnected systems grows, so does the vulnerability to cyber attack. Protecting both the machines and the networks that link them will be essential to maintaining operational integrity.

Piers Kontic-Coveney, Cyber Threat Intelligence Analyst, highlighted:

“The response being developed across several programmes is not to out-power jamming but to route around it vertically, pushing the command node above the jamming floor into high altitude platforms or low earth orbit constellations (6).

This works operationally, but it introduces a question the resilience framing doesn't fully address: if the decision loop now passes through a satellite constellation owned by a commercial provider operating under foreign law, the solution to jamming may inadvertently recreate the extraterritoriality problem at a different layer of the stack.

Sovereign space-based communications infrastructure is not an optional enhancement in this context. It is the architectural prerequisite for autonomous effects that remain legally and operationally accountable to the nation deploying them.”

Beyond the battlefield

While Ukraine provides the most visible example, the implications of HMT extend far beyond direct combat. In intelligence analysis, AI systems are already augmenting human analysts by processing vast datasets and identifying patterns that would be impossible to detect manually (2).

In logistics, autonomous systems and predictive analytics are optimising supply chains, reducing delays and improving resilience. Training is also evolving, with AI-driven simulations allowing personnel to train alongside machine teammates in realistic environments.

These developments reinforce a key point. HMT is not a niche capability. It is becoming a foundational element across defence functions.

Lessons for Western militaries

Ukraine’s experience offers several important lessons. First, innovation does not require perfect systems. Many of the capabilities being deployed are iterative, combining commercial off-the-shelf technologies with rapid battlefield adaptation (3).

Second, success depends as much on people and processes as on technology. Doctrine, training and organisational culture must evolve alongside new capabilities. Effective HMT requires a rethinking of how teams are structured, trained and led (9).

Finally, speed matters. The ability to rapidly integrate new technologies and adapt to changing conditions is becoming a decisive advantage.

The road ahead

Looking forward, human-machine teaming will become more sophisticated and more deeply embedded in military operations. AI systems will increasingly act as collaborators, capable of anticipating needs, recommending actions and executing tasks within defined parameters.

The role of the human will continue to evolve, shifting towards supervision, decision-making and strategic oversight. However, this does not reduce human importance. On the contrary, it increases the need for clear judgement, ethical awareness and effective command.

The events in Ukraine have shown what is possible when humans and machines are effectively integrated. But they also highlight the complexity of doing so at scale.

Ultimately, the future of HMT will be shaped by the interplay of technology, trust and ethics. Those who can successfully balance these elements will not just gain an operational edge. They will redefine how military power is generated and applied in the 21st century.

References

(1) Atlantic Council. (2023). Battlefield applications for human-machine teaming. https://www.atlanticcouncil.org/wp-content/uploads/2023/08/Battlefield-Applications-for-HMT.pdf

(2) Centre for Emerging Technology and Security (CETaS). (2022). Human-machine teaming and intelligence analysis. https://cetas.turing.ac.uk/sites/default/files/2022-12/cetas_research_report_-_hmt_and_intelligence_analysis_vfinal.pdf

(3) Ifri. (2024). Mapping miltech in war: Eight lessons from Ukraine’s battlefield. https://www.ifri.org/en/studies/mapping-miltech-war-eight-lessons-ukraines-battlefield

(4) Politico. (2026). Zelenskyy highlights robotic systems capturing Russian positions. https://www.politico.eu/article/volodymyr-zelenskyy-robotic-systems-russia-army-positions-ukraine/

(5) CNN. (2026). Robots and drones reshape Ukraine battlefield. https://edition.cnn.com/2026/04/20/europe/robots-ukraine-battlefield-drones-intl-cmd

(6) CSIS. (2024). Ukraine’s future vision and current capabilities for AI-enabled autonomous warfare. https://www.csis.org/analysis/ukraines-future-vision-and-current-capabilities-waging-ai-enabled-autonomous-warfare

(7) BBC News. (2026). Ukraine war: The growing role of drones and robotics. https://www.bbc.co.uk/news/articles/c62662gzlp8o

(8) Center for Security and Emerging Technology (CSET). (2023). Building trust in AI: A new era of human-machine teaming. https://cset.georgetown.edu/article/building-trust-in-ai-a-new-era-of-human-machine-teaming/

(9) Royal United Services Institute (RUSI). (2024). Human-machine teaming. https://static.rusi.org/human-machine-teaming-sr-jan-2024.pdf

(10) Thales Group. (2023). Human-machine teaming: Operational advantage through ethical AI. https://www.thalesgroup.com/en/news-centre/insights/united-kingdom/human-machine-teaming-operational-advantage-through-ethical-ai

(11) The Soufan Center. (2025, August 6). Agentic AI: Does the future of warfare look autonomous? https://thesoufancenter.org/intelbrief-2025-august-6/

(12) UK Ministry of Defence. (2025). AI-powered drones to detect explosive threats and protect military personnel. https://www.gov.uk/government/news/ai-powered-drones-to-detect-explosive-threats-and-protect-military-personnel